MyCAT High-Availability Configuration and Clustering

1. Read/write splitting on MyCAT nodes

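MyCAT's read/write splitting works as follows:

- SQL inside a transaction goes to the write node by default. If it starts with the comment /*balance*/, the node is chosen according to balance="1" or "2".

- Auto-commit SELECT statements go to the read nodes and are balanced randomly across all available read nodes, chosen according to balance="1" or "2". A SELECT that starts with the comment /*balance*/ goes to the write node instead. This covers cases where read/write splitting is enabled but some queries need strongly consistent, up-to-date data and must therefore read from the write node.

- When a master node goes down, none of its read nodes are used any more, because replication from that master has stopped and their data may no longer be current. MyCAT then load-balances SELECTs across all the read nodes of another master.

- When every master node has failed, MyCAT keeps the system available by still sending all auto-commit SELECTs to every surviving read node. Many pages of the application can still display data; only user changes and submissions fail.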
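The four rules above can be condensed into a small routing function. This is a minimal Python sketch under stated assumptions, not MyCAT's implementation: the Host/WriteHost classes and the route() name are hypothetical, hinted SQL inside a transaction is only sent to readers when it is a SELECT (an assumption), and the balance modes described below are ignored, so the read pool is simply the live readHosts.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional

HINT = "/*balance*/"

@dataclass
class Host:
    name: str
    alive: bool = True

@dataclass
class WriteHost(Host):
    readers: List[Host] = field(default_factory=list)

def read_pool(masters: List[WriteHost]) -> List[Host]:
    """Read hosts eligible for SELECT load balancing."""
    if any(m.alive for m in masters):
        # Readers of a failed master are skipped: their data may be stale.
        return [r for m in masters if m.alive for r in m.readers if r.alive]
    # Every master is down: keep serving reads from all surviving readers.
    return [r for m in masters for r in m.readers if r.alive]

def route(sql: str, in_txn: bool, masters: List[WriteHost],
          rng: Optional[random.Random] = None) -> Optional[str]:
    """Return the name of the host that would run `sql`, or None if it fails."""
    chooser = rng or random
    hinted = sql.lstrip().startswith(HINT)
    body = sql.lstrip()[len(HINT):] if hinted else sql
    is_select = body.lstrip().lower().startswith("select")
    writer = next((m for m in masters if m.alive), None)  # current write node
    if in_txn:
        # Transactions use the write node unless a hinted SELECT opts out.
        wants_read = hinted and is_select
    else:
        # Auto-commit SELECTs use the read pool unless the hint forces the
        # writer, or no writer is left at all.
        wants_read = is_select and (not hinted or writer is None)
    if wants_read:
        pool = read_pool(masters)
        return chooser.choice(pool).name if pool else None
    return writer.name if writer else None
```

With two masters (hostM1 with reader hostS1, hostM2 with reader hostS2), an UPDATE goes to hostM1, a plain SELECT to hostS1 or hostS2, and once hostM1 is marked dead a SELECT can only reach hostS2.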

MyCAT's read/write splitting is configured like this:

<dataHost name="testhost" maxCon="1000" minCon="10" balance="1" writeType="0" dbType="mysql" dbDriver="native">

<heartbeat>select user()</heartbeat>

<!-- can have multi write hosts -->

<writeHost host="hostM1" url="localhost:3306" user="root" password="">

<readHost host="hostM2" url="10.18.96.133:3306" user="test" password="test" />

</writeHost>

</dataHost>

dataHost的balance属性设置为:

- 0,不开启读写分离机制

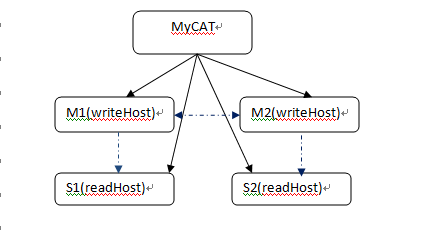

- 1,全部的readHost与stand by writeHost参与select语句的负载均衡,简单的说,当双主双从模式(M1->S1,M2->S2,并且M1与M2互为主备),正常情况下,M2,S1,S2都参与select语句的负载均衡。

- 2,所有的readHost与writeHost都参与select语句的负载均衡,也就是说,当系统的写操作压力不大的情况下,所有主机都可以承担负载均衡。
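To make the three balance values concrete, the sketch below lists which hosts share SELECT load in the dual-master, dual-slave example (M1->S1, M2->S2). It is a hypothetical helper, assuming that the first writeHost is the active writer, that balance=0 sends SELECTs to that writer, and that every host is alive.

```python
from typing import Dict, List

def select_pool(balance: int, writers: List[str],
                readers: Dict[str, List[str]]) -> List[str]:
    """Hosts that share SELECT load for one dataHost.

    writers: writeHost names in config order; the first is the active one.
    readers: readHost names under each writeHost.
    """
    active = writers[0]
    all_readers = [r for w in writers for r in readers.get(w, [])]
    if balance == 0:
        return [active]                    # no read/write splitting
    if balance == 1:
        return writers[1:] + all_readers   # standby writeHosts + every readHost
    if balance == 2:
        return writers + all_readers       # every host, active writer included
    raise ValueError(f"unsupported balance value: {balance}")
```

For writers ["M1", "M2"] and readers {"M1": ["S1"], "M2": ["S2"]}, balance=1 yields ["M2", "S1", "S2"], matching the example in the text.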

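A dataHost element represents a group of databases that replicate data among themselves; the DBA must make sure replication is actually configured between these servers. A writeHost corresponds to the master DB server, and the readHosts under it are the slave DB servers that replicate from it. When a dataHost has several writeHosts, MyCAT automatically detects when any writeHost goes down and tries to switch to the next available writeHost.

Note: MyCAT does not replicate data between the write and read nodes; you must set up replication at the database level yourself.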

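2. Multi-master, multi-slave backends behind MyCAT

a. Backend databases in a one-master, multi-slave setup, with read/write splitting enabled.

b. Backend databases in a dual-master, dual-slave (or multi-slave) setup, with read/write splitting enabled.

c. Backend databases in a multi-master, multi-slave setup, with read/write splitting enabled.

The last two setups offer higher availability: when one write node (master) fails, MyCAT detects it through its heartbeat and automatically switches to the next write node. At any moment MyCAT writes to only one write node.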

下面是典型的双主双从的Mysql集群配置:

多主的配置方式为在host中配置多个writerhost,mycat再执行时候会默认根据配置文件的主机顺序获取写节点执行,如果,心跳检测到当前写不可用,会切换当前写到下一个。

dataHost的writeType属性设置为:

writeType=0 默认配置。

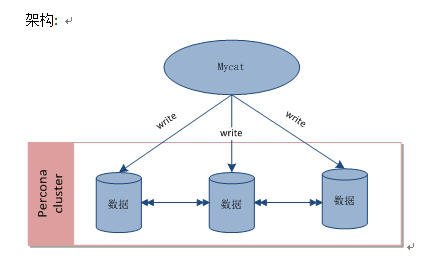

writeType=1 代表配置多主,mycat会往所有写节点,随机写数据,但是每次只会写入一个节点,此模式下无读节点,节点之间开启数据库级别同步。

此配置为mysql高级级别使用,因为 多主会带来数据库同步问题。

<dataHost name="localhost1" maxCon="1000" minCon="10" balance="0" writeType="1" dbType="mysql" dbDriver="native">

<heartbeat>select user()</heartbeat>

<writeHost host="hostM1" url="localhost:3306" user="root" password="123456" />

<writeHost host="hostM2" url="localhost:3317" user="root" password="123456" />

<writeHost host="hostM3" url="localhost:3319" user="root" password="123456" />

</dataHost>
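The difference between writeType=0 and writeType=1 can be sketched as follows. The function name and its arguments are hypothetical; the sketch assumes that with writeType=0 MyCAT keeps the current writer while its heartbeat succeeds and otherwise moves to the next live writeHost in configuration order, and it does not model what happens when a failed host comes back.

```python
import random
from typing import Dict, List, Optional

def pick_write_host(write_type: int, hosts: List[str], alive: Dict[str, bool],
                    current: Optional[str] = None,
                    rng: Optional[random.Random] = None) -> Optional[str]:
    """Choose the writeHost for the next write; None if every writeHost is down."""
    up = [h for h in hosts if alive.get(h, False)]
    if not up:
        return None
    if write_type == 1:
        # Multi-master: each write goes to one randomly chosen live writeHost.
        return (rng or random).choice(up)
    if write_type != 0:
        raise ValueError(f"unsupported writeType: {write_type}")
    # writeType=0: keep the current writer while its heartbeat succeeds.
    if current in up:
        return current
    # Otherwise take the next live writeHost in config order, wrapping around.
    start = hosts.index(current) + 1 if current in hosts else 0
    for i in range(len(hosts)):
        candidate = hosts[(start + i) % len(hosts)]
        if alive.get(candidate, False):
            return candidate
    return None  # not reached: `up` is non-empty
```

With the three hosts above all up, writeType=0 keeps returning hostM1; if hostM1 fails while it is current, the next write goes to hostM2.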

When the log level in log4j.xml is set to debug, MyCAT writes log lines like the following each time it selects a node:

16:37:21.660 DEBUG [Processor0-E3] (PhysicalDBPool.java:333) -select read source hostM1 for dataHost:localhost1

16:37:21.662 DEBUG [Processor0-E3] (PhysicalDBPool.java:333) -select read source hostM1 for dataHost:localhost1

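From these messages you can tell which read (or write) node each SQL statement was sent to, and therefore whether read/write splitting is actually happening.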
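On a busy system it is easier to count these debug lines per host than to read them one by one. A minimal sketch, assuming the log format shown above; the regular expression only matches the "select read source ... for dataHost:..." text, and count_sources() is a hypothetical name.

```python
import re
from collections import Counter
from typing import Iterable

PATTERN = re.compile(r"select read source (\S+) for dataHost:(\S+)")

def count_sources(lines: Iterable[str]) -> Counter:
    """Count how often each (dataHost, host) pair was selected."""
    counts: Counter = Counter()
    for line in lines:
        match = PATTERN.search(line)
        if match:
            counts[(match.group(2), match.group(1))] += 1
    return counts
```

Passing the lines of the MyCAT log file (its location depends on log4j.xml) to count_sources() shows how many statements each host received; if only the writeHost ever appears, read/write splitting is not taking effect.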

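Note: multiple writeHosts implement multi-master, and each write still goes to only one of them.

Multiple dataHosts, by contrast, implement sharding; do not confuse sharding with multi-master.

A sharded schema.xml using writeType=0: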

<?xml version="1.0"?>

<!DOCTYPE mycat:schema SYSTEM "schema.dtd">

<mycat:schema xmlns:mycat="http://org.opencloudb/">

<schema name="mycat" checkSQLschema="true" sqlMaxLimit="100">

<table name="t_user" primaryKey="user_id" dataNode="dn1,dn2" rule="rule1" />

<table name="t_node" primaryKey="vid" autoIncrement="true" dataNode="dn1,dn2" rule="rule1" />

<table name="t_area" type="global" primaryKey="ID" dataNode="dn1,dn2" />

</schema>

<dataNode name="dn1" dataHost="jdbchost" database="mycat_node1" />

<dataNode name="dn2" dataHost="jdbchost2" database="mycat_node1" />

<dataHost name="jdbchost" maxCon="500" minCon="10" balance="0" writeType="0" dbType="mysql" dbDriver="native">

<heartbeat>select 1</heartbeat>

<writeHost host="maste1" url="192.168.0.1:3306" user="root" password="root">

<!-- <readHost host="readshard" url="192.168.0.2:3306" user="root" password="root"/> -->

</writeHost>

<writeHost host="maste2" url="192.168.0.3:3306" user="root" password="root">

<!-- <readHost host="readshard" url="192.168.0.4:3306" user="root" password="root"/> -->

</writeHost>

</dataHost> <!-- -->

<dataHost name="jdbchost2" maxCon="500" minCon="10" balance="0" writeType="0" dbType="mysql" dbDriver="native">

<heartbeat>select 1</heartbeat>

<writeHost host="maste1" url="192.168.0.5:3306" user="root" password="root">

<!-- <readHost host="readshard" url="192.168.0.6:3306" user="root" password="root"/> -->

</writeHost>

<writeHost host="maste2" url="192.168.0.6:3307" user="root" password="root">

<!-- <readHost host="readshard" url="192.168.0.8:3306" user="root" password="root"/> -->

</writeHost>


</dataHost>

</mycat:schema>

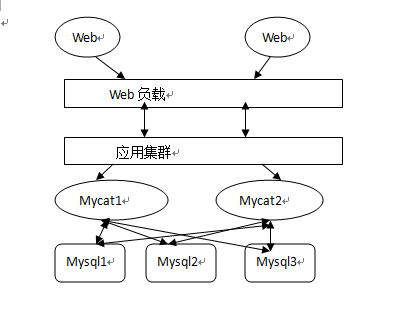
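The schema above combines both ideas: the rule picks one dataNode for a sharded table such as t_user, a global table such as t_area is written to every dataNode, and inside each dataNode's dataHost a write goes to only one writeHost. The sketch below uses a simple modulo on the key as a hypothetical stand-in for rule1 (the real rule lives in rule.xml, which is not shown here) and assumes all writeHosts are up.

```python
from typing import List, Optional, Tuple

# dataNode -> (dataHost, writeHosts in config order), as in the schema above
DATA_NODES = {
    "dn1": ("jdbchost", ["maste1", "maste2"]),
    "dn2": ("jdbchost2", ["maste1", "maste2"]),
}

TABLES = {
    "t_user": {"dataNode": ["dn1", "dn2"]},                    # sharded (rule1)
    "t_area": {"dataNode": ["dn1", "dn2"], "type": "global"},  # global table
}

def route_insert(table: str, key: Optional[int] = None) -> List[Tuple[str, str, str]]:
    """Return (dataNode, dataHost, writeHost) for every place the row is written."""
    conf = TABLES[table]
    nodes = conf["dataNode"]
    if conf.get("type") == "global":
        targets = list(nodes)                  # every dataNode keeps a full copy
    else:
        if key is None:
            raise ValueError("a sharded table needs a sharding key")
        targets = [nodes[key % len(nodes)]]    # hypothetical modulo stand-in for rule1
    # writeType=0 with all hosts up: each dataHost writes to its first writeHost only
    return [(dn, DATA_NODES[dn][0], DATA_NODES[dn][1][0]) for dn in targets]
```

A t_user row with key 7 lands only on dn2 (jdbchost2, maste1), while a t_area row is written to both dn1 and dn2.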

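3. Clustering multiple MyCAT nodes

Requests can be load-balanced across several MyCAT instances:

1. Load across the MyCAT cluster can be distributed by a hardware switch that forwards the requests.

2. It can also be distributed in software, for example with HA-JDBC in the client or with HAProxy.

For high availability, MyCAT currently uses HAProxy in place of the cluster-node configuration that Cobar used.

A dedicated load balancer, mycat-balance, is still under development.

HAProxy + keepalived + MyCAT cluster high-availability setup:

The following is reposted from 武: http://blog.csdn.net/wdw1206/article/details/44201331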

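Deployment diagram

How the deployment works:

1. keepalived and haproxy must be installed on the same machine (for example, both are installed on 172.17.210.83). keepalived claims the VIP (virtual IP) for that server; once it holds the VIP, the host can be reached either through its own IP (172.17.210.83) or directly through the VIP (172.17.210.103).

2. keepalived on 172.17.210.64 also competes for the VIP. The winner is decided by the priority setting in keepalived.conf (priority 150; the larger the value, the higher the priority; it is 120 on 172.17.210.64, so master and slave use different values). In practice, however, whichever host starts its keepalived service first usually takes the VIP, even if it is the slave.

3. haproxy distributes requests sent to the VIP across the MyCAT instances, which provides load balancing. haproxy also checks whether each MyCAT instance is alive and forwards requests only to live ones.

4. If one server (keepalived + haproxy) goes down, keepalived on the other server immediately takes over the VIP and the service continues.

If a MyCAT server goes down, haproxy stops forwarding to it, so MyCAT as a whole stays available.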

Installing HAProxy

|

# useradd haproxy

# tar zxvf haproxy-1.4.25.tar.gz

# cd haproxy-1.4.25

# make TARGET=linux26 PREFIX=/usr/local/haproxy ARCH=x86_64

# make install PREFIX=/usr/local/haproxy

# cd /usr/local/haproxy

# chown -R haproxy:haproxy *

|

haproxy.cfg

|

#cd /usr/local/haproxy

#touch haproxy.cfg

# vi /usr/local/haproxy/haproxy.cfg

global

log 127.0.0.1 local0 ## send logs to the local syslog, facility local0

maxconn 4096

chroot /usr/local/haproxy

user haproxy

group haproxy

daemon

defaults

log global

option dontlognull

retries 3

option redispatch

maxconn 2000

contimeout 5000

clitimeout 50000

srvtimeout 50000

listen admin_status 172.17.210.103:48800 ##VIP

stats uri /admin-status ## statistics page

stats auth admin:admin

mode http

option httplog

listen allmycat_service 172.17.210.103:8096 ## forwards to port 8066 on each MyCAT, i.e. the MyCAT service port

mode tcp

option tcplog

option httpchk OPTIONS * HTTP/1.1\r\nHost:\ www

balance roundrobin

server mycat_133 172.17.210.133:8066 check port 48700 inter 5s rise 2 fall 3

server mycat_134 172.17.210.134:8066 check port 48700 inter 5s rise 2 fall 3

srvtimeout 20000

listen allmycat_admin 172.17.210.103:8097 ## forwards to port 9066 on each MyCAT, i.e. the MyCAT management console port

mode tcp

option tcplog

option httpchk OPTIONS * HTTP/1.1\r\nHost:\ www

balance roundrobin

server mycat_133 172.17.210.133:9066 check port 48700 inter 5s rise 2 fall 3

server mycat_134 172.17.210.134:9066 check port 48700 inter 5s rise 2 fall 3

srvtimeout 20000

|
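The check settings on each server line (inter 5s rise 2 fall 3) together with balance roundrobin can be modelled as a small state machine: a MyCAT instance leaves the rotation after three consecutive failed checks, within roughly 15 seconds at inter 5s, and rejoins after two consecutive successes. The classes are hypothetical, and starting every server in the UP state is an assumption of the sketch.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Server:
    name: str
    rise: int = 2        # consecutive successes needed to come back UP
    fall: int = 3        # consecutive failures needed to go DOWN
    up: bool = True      # assumption: servers start in the UP state
    streak: int = 0      # consecutive results contradicting the current state

    def record(self, ok: bool) -> None:
        """Apply one health-check result (one check every `inter` = 5s)."""
        if ok == self.up:
            self.streak = 0           # result agrees with the current state
            return
        self.streak += 1
        if self.up and self.streak >= self.fall:
            self.up, self.streak = False, 0
        elif not self.up and self.streak >= self.rise:
            self.up, self.streak = True, 0

class RoundRobin:
    """Hand out servers in turn, skipping any that are DOWN."""

    def __init__(self, servers: List[Server]) -> None:
        self.servers = servers
        self.next = 0

    def pick(self) -> Optional[str]:
        n = len(self.servers)
        for i in range(n):
            server = self.servers[(self.next + i) % n]
            if server.up:
                self.next = (self.next + i + 1) % n
                return server.name
        return None                   # no live MyCAT left
```

Two failed checks leave mycat_134 in rotation; the third takes it out, after which every connection goes to mycat_133 until mycat_134 passes two checks in a row.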

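HAProxy logging

HAProxy does not write log files itself: it sends log messages to syslog (the log 127.0.0.1 local0 line in haproxy.cfg), so the syslog service must be configured to receive them. On Linux this is the rsyslogd service. First install rsyslog with yum -y install rsyslog, then: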

|

#cd /etc/rsyslog.d/

If this directory does not exist, create it:

#cd /etc

#mkdir rsyslog.d

#cd /etc/rsyslog.d/

#touch haproxy.conf

#vi /etc/rsyslog.d/haproxy.conf

$ModLoad imudp

$UDPServerRun 514

local0.* /var/log/haproxy.log

#vi /etc/rsyslog.conf

1. Add the following just above the #### RULES #### line:

# Include all config files in /etc/rsyslog.d/

$IncludeConfig /etc/rsyslog.d/*.conf

#### RULES ####

2. Below the line local7.* /var/log/boot.log, add the following (the result should look like this):

# Save boot messages also to boot.log

local7.* /var/log/boot.log

local0.* /var/log/haproxy.log

|

Save the file and restart the rsyslog service:

service rsyslog restart

The log now appears in /var/log/haproxy.log.
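To confirm that the local0 rule works before relying on HAProxy, you can send a test message yourself. A minimal sketch, assuming rsyslog listens on UDP 514 as configured above; the haproxy-test tag and the function names are made up. local0 is facility 16 and info is severity 6, so the priority prefix is 16 * 8 + 6 = 134.

```python
import socket
from logging.handlers import SysLogHandler

def build_datagram(message: str, severity: int = SysLogHandler.LOG_INFO) -> bytes:
    """Encode a minimal BSD-style syslog message for facility local0."""
    pri = (SysLogHandler.LOG_LOCAL0 << 3) | severity   # 16 * 8 + 6 = 134 for info
    return f"<{pri}>haproxy-test: {message}".encode()

def send_test_message(message: str, host: str = "127.0.0.1", port: int = 514) -> None:
    """Send one UDP datagram to the imudp listener enabled in haproxy.conf."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(build_datagram(message), (host, port))
```

After calling send_test_message("hello"), a line containing haproxy-test: hello should appear in /var/log/haproxy.log.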

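Configuring the MyCAT liveness check

Both MyCAT server1 and MyCAT server2 need a script that answers health checks on port 48700 (the check port used in haproxy.cfg). It is served through xinetd, a standard Linux base service.

First, add a configuration file under the xinetd directory that maps the port to the script.

1. If xinetd is not installed, install it with:

yum install xinetd -y

2. Check that /etc/xinetd.conf ends with the line includedir /etc/xinetd.d;

add it if it is missing.

3. Check that the /etc/xinetd.d directory exists, and create it if it does not:

#cd /etc

#mkdir xinetd.d

4. Create /etc/xinetd.d/mycat_status: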

|


#cd /etc/xinetd.d/

#touch mycat_status

#vim /etc/xinetd.d/mycat_status

service mycat_status

{

flags = REUSE

socket_type = stream

port = 48700

wait = no

user = root

server =/usr/local/bin/mycat_status

log_on_failure += USERID

disable = no

}

|

5. The /usr/local/bin/mycat_status script (make it executable with chmod +x /usr/local/bin/mycat_status so xinetd can run it):

|

#!/bin/bash

# /usr/local/bin/mycat_status

# This script checks whether a mycat server is running on localhost. It will

# return:

#

# "HTTP/1.1 200 OK\r" (if mycat is running smoothly)

#

# "HTTP/1.1 503 Service Unavailable\r" (else)

# Count the lines of "mycat status" output that report "not running"

mycat=`/usr/local/mycat/bin/mycat status | grep 'not running' | wc -l`

if [ "$mycat" = "0" ];

then

/bin/echo -e "HTTP/1.1 200 OK\r\n"

else

/bin/echo -e "HTTP/1.1 503 Service Unavailable\r\n"

fi

|

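6. Add the mycat_status service to /etc/services:

|

#cd /etc

#vi services

Append at the end:

mycat_status 48700/tcp # mycat_status

Save the file, then

restart the xinetd service:

service xinetd restart

|

7. Verify that the mycat_status service is running:

|

#netstat -antup|grep 48700

If it succeeded, you will see output like this: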

[root@localhost log]# netstat -antup|grep 48700

tcp 0 0 :::48700 :::* LISTEN 12609/xinetd

|
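From the HAProxy side, a check passes when the reply on port 48700 carries a 2xx or 3xx status. The sketch below mirrors the decision made by mycat_status, applies that rule, and probes the port with the same request line that option httpchk sends in haproxy.cfg. The function names are hypothetical; probe() can be run from the HAProxy host to test a MyCAT server before HAProxy is started.

```python
import socket

def mycat_status_reply(status_output: str) -> str:
    """Mirror of /usr/local/bin/mycat_status: choose the status line to print."""
    if "not running" in status_output:
        return "HTTP/1.1 503 Service Unavailable\r\n"
    return "HTTP/1.1 200 OK\r\n"

def check_passes(status_line: str) -> bool:
    """HAProxy's httpchk counts any 2xx or 3xx response as a passed check."""
    parts = status_line.split()
    if len(parts) < 2 or not parts[0].startswith("HTTP/") or not parts[1].isdigit():
        return False
    return 200 <= int(parts[1]) < 400

def probe(host: str, port: int = 48700, timeout: float = 3.0) -> str:
    """Send the request from haproxy.cfg and return the first line of the reply."""
    request = b"OPTIONS * HTTP/1.1\r\nHost: www\r\n\r\n"
    data = b""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(request)
        while b"\n" not in data:
            try:
                chunk = sock.recv(1024)
            except ConnectionResetError:
                break  # the responder may close without reading the request
            if not chunk:
                break
            data += chunk
    return data.split(b"\n", 1)[0].decode(errors="replace").rstrip("\r")
```

For example, check_passes(probe("172.17.210.133")) should be True while MyCAT runs on that server and False once it stops.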

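Starting HAProxy

keepalived must be started before haproxy; otherwise haproxy cannot start, because it binds to the VIP and the VIP exists only on the host where keepalived has brought it up.

/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.cfg

Errors when starting HAProxy

If you see the following errors: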

[root@localhost bin]# /usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.cfg

[ALERT] 183/115915 (12890) : Starting proxy admin_status: cannot bind socket

[ALERT] 183/115915 (12890) : Starting proxy allmycat_service: cannot bind socket

[ALERT] 183/115915 (12890) : Starting proxy allmycat_admin: cannot bind socket

the cause is that this machine has not taken over the VIP.
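One way to check this before starting HAProxy is to try binding the VIP, which fails with EADDRNOTAVAIL when the address is not configured on the host. A minimal sketch; can_bind() is a hypothetical helper, port 0 lets the kernel pick a free port so only the address is tested, and the result is meaningless if net.ipv4.ip_nonlocal_bind is set to 1, because the kernel then allows binding any address.

```python
import errno
import socket

def can_bind(ip: str, port: int = 0) -> bool:
    """Return True if `ip` is configured on this host (e.g. the VIP is held here)."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((ip, port))
        except OSError as exc:
            if exc.errno == errno.EADDRNOTAVAIL:
                return False   # address not on this host: the VIP is elsewhere
            raise              # other errors, e.g. EADDRINUSE for a busy port
    return True
```

On the node that does not currently hold the VIP, can_bind("172.17.210.103") returns False, which corresponds to the "cannot bind socket" alerts above.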

For convenience, add scripts to start and stop haproxy.

Start script starthap:

#!/bin/sh

/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.cfg &

Stop script stophap:

#!/bin/sh

ps -ef | grep sbin/haproxy | grep -v grep |awk '{print $2}'|xargs kill -s 9

Make both executable:

chmod +x starthap

chmod +x stophap

Once started, the statistics page is available at http://172.17.210.103:48800/admin-status (the user name and password are both admin, as set in haproxy.cfg).
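Besides the web page, HAProxy serves the same statistics as CSV when ;csv is appended to the stats URI, which is easier to script against. The sketch below assumes the admin:admin credentials from haproxy.cfg; the function names are hypothetical, and only the pxname, svname and status columns are used.

```python
import base64
import csv
import io
import urllib.request
from typing import Dict

def fetch_stats_csv(url: str, user: str, password: str, timeout: float = 5.0) -> str:
    """Download the stats page in CSV form (HAProxy serves it at '<stats uri>;csv')."""
    req = urllib.request.Request(url + ";csv")
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req.add_header("Authorization", f"Basic {token}")
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode()

def server_status(csv_text: str) -> Dict[str, str]:
    """Map 'proxy/server' to its status (UP, DOWN, ...), skipping FRONTEND/BACKEND rows."""
    lines = csv_text.splitlines()
    if lines and lines[0].startswith("# "):
        lines[0] = lines[0][2:]            # the header line starts with '# '
    result = {}
    for row in csv.DictReader(io.StringIO("\n".join(lines))):
        if row["svname"] not in ("FRONTEND", "BACKEND"):
            result[f"{row['pxname']}/{row['svname']}"] = row["status"]
    return result
```

For example, server_status(fetch_stats_csv("http://172.17.210.103:48800/admin-status", "admin", "admin")) should list allmycat_service/mycat_133 and allmycat_service/mycat_134 as UP while both MyCAT instances pass their checks.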

Installing OpenSSL

OpenSSL must be installed: keepalived depends on it and will not compile without it.

|

tar zxvf openssl-1.0.1g.tar.gz

cd openssl-1.0.1g

./config --prefix=/usr/local/openssl

./config -t

make depend

make

make test

make install

ln -s /usr/local/openssl /usr/local/ssl

|

|

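vi /etc/ld.so.conf

# Append the following line to the end of /etc/ld.so.conf:

/usr/local/openssl/lib

ldconfig # reload the shared-library cache after editing ld.so.conf

vi /etc/profile

export OPENSSL=/usr/local/openssl/bin

export PATH=$PATH:$OPENSSL

source /etc/profile

yum install openssl-devel -y # if yum cannot download it, fix the yum repository configuration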

|

Test:

|

ldd /usr/local/openssl/bin/openssl

linux-vdso.so.1 => (0x00007fff996b9000)

libdl.so.2 =>/lib64/libdl.so.2 (0x00000030efc00000)

libc.so.6 =>/lib64/libc.so.6 (0x00000030f0000000)

/lib64/ld-linux-x86-64.so.2 (0x00000030ef800000)

which openssl

/usr/bin/openssl

openssl version

OpenSSL 1.0.0-fips 29 Mar 2010

|

Installing keepalived

keepalived is installed on both 172.17.210.64 and 172.17.210.83.

|

tar zxvf keepalived-1.2.13.tar.gz

cd keepalived-1.2.13

./configure --prefix=/usr/local/keepalived

make

make install

cp /usr/local/keepalived/sbin/keepalived /usr/sbin/

cp /usr/local/keepalived/etc/sysconfig/keepalived /etc/sysconfig/

cp /usr/local/keepalived/etc/rc.d/init.d/keepalived /etc/init.d/

mkdir /etc/keepalived

cd /etc/keepalived/

cp /usr/local/keepalived/etc/keepalived/keepalived.conf /etc/keepalived

mkdir -p /usr/local/keepalived/var/log

|

Configuring keepalived

|

# Create the directory for the check scripts

mkdir /etc/keepalived/scripts

cd /etc/keepalived/scripts

|

keepalived.conf:

vi /etc/keepalived/keepalived.conf

Master:

|

! Configuration File for keepalived

vrrp_script chk_http_port {

script "/etc/keepalived/scripts/check_haproxy.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state MASTER #MASTER on 172.17.210.83

interface eth0 #network interface that serves external traffic

virtual_router_id 51 #VRRP group ID; must be identical on both nodes so they belong to the same VRRP group

priority 150 #a larger value means a higher priority; use 120 on 172.17.210.64

advert_int 1 #advertisement interval, in seconds

authentication { #authentication type and password. The types are PASS and AH; PASS is the usual choice, as AH is reported to be problematic

auth_type PASS

auth_pass 1111

}

track_script {

chk_http_port #run check_haproxy.sh to check whether haproxy is alive

}

virtual_ipaddress { #the VIP; must match the address haproxy.cfg binds to

172.17.210.103 dev eth0 scope global

}

notify_master /etc/keepalived/scripts/haproxy_master.sh

notify_backup /etc/keepalived/scripts/haproxy_backup.sh

notify_fault /etc/keepalived/scripts/haproxy_fault.sh

notify_stop /etc/keepalived/scripts/haproxy_stop.sh

}

|

slave:

|

! Configuration File for keepalived

vrrp_script chk_http_port {

script "/etc/keepalived/scripts/check_haproxy.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state BACKUP #BACKUP on 172.17.210.64 (the master 172.17.210.83 uses MASTER)

interface eth1 #network interface that serves external traffic

virtual_router_id 51 #VRRP group ID; must be identical on both nodes so they belong to the same VRRP group

priority 120 #a larger value means a higher priority; the master 172.17.210.83 uses 150

advert_int 1 #advertisement interval, in seconds

authentication { #authentication type and password. The types are PASS and AH; PASS is the usual choice, as AH is reported to be problematic

auth_type PASS

auth_pass 1111

}

track_script {

chk_http_port #run check_haproxy.sh to check whether haproxy is alive

}

virtual_ipaddress { #the VIP; must match the address haproxy.cfg binds to

172.17.210.103 dev eth1 scope global

}

notify_master /etc/keepalived/scripts/haproxy_master.sh

notify_backup /etc/keepalived/scripts/haproxy_backup.sh

notify_fault /etc/keepalived/scripts/haproxy_fault.sh

notify_stop /etc/keepalived/scripts/haproxy_stop.sh

}

|
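With these settings, the VIP goes to the node with the highest effective priority, and a positive weight in vrrp_script is added to the priority while the check script succeeds. The sketch below is a simplified Python model of that election, assuming preemption is enabled (the keepalived default) and ignoring the start-order effect mentioned earlier; the class and function names are hypothetical. It shows that weight 2 cannot close the gap between 150 and 120, which is why check_haproxy.sh, as described next, is meant to stop keepalived when haproxy cannot be restarted.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address
from typing import List, Optional

WEIGHT = 2  # "weight 2" in vrrp_script chk_http_port

@dataclass
class Node:
    ip: str
    priority: int          # "priority" in keepalived.conf
    check_ok: bool = True  # last result of check_haproxy.sh
    running: bool = True   # is the keepalived process alive?

def effective_priority(node: Node) -> int:
    # A positive weight is added while the tracked script succeeds.
    return node.priority + (WEIGHT if node.check_ok else 0)

def vip_owner(nodes: List[Node]) -> Optional[str]:
    """IP of the node that ends up holding the VIP, or None if none is running."""
    live = [n for n in nodes if n.running]
    if not live:
        return None
    # Highest priority wins; VRRP breaks ties with the higher primary IP address.
    best = max(live, key=lambda n: (effective_priority(n), IPv4Address(n.ip)))
    return best.ip
```

A failed check only lowers the master from 152 to 150, still above the slave's 122; the VIP moves to 172.17.210.64 only once keepalived on the master stops.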

check_haproxy.sh

vi /etc/keepalived/scripts/check_haproxy.sh

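What the script does: if no haproxy process is running, it starts haproxy; if haproxy is still not running after that, it stops keepalived so that the other node takes over the VIP.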

|

#!/bin/bash

STARTHAPROXY="/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.cfg"

STOPKEEPALIVED="/etc/init.d/keepalived stop"

LOGFILE="/usr/local/keepalived/var/log/keepalived-haproxy-state.log"

echo "[check_haproxy status]" >> $LOGFILE

date >> $LOGFILE

# Count the running haproxy processes

A=`ps -C haproxy --no-header | wc -l`

if [ $A -eq 0 ];then

# haproxy is down: try to start it and give it a few seconds

echo $STARTHAPROXY >> $LOGFILE

$STARTHAPROXY >> $LOGFILE 2>&1

sleep 5

fi

if [ `ps -C haproxy --no-header | wc -l` -eq 0 ];then

# Still no haproxy: stop keepalived so the other node takes over the VIP

$STOPKEEPALIVED >> $LOGFILE 2>&1

exit 1

else

exit 0

fi

|

haproxy_master.sh (identical on master and slave)

|

#!/bin/bash

STARTHAPROXY="/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.cfg"

LOGFILE="/usr/local/keepalived/var/log/keepalived-haproxy-state.log"

# A pipeline cannot be stored in a variable, so stopping haproxy is a function

stop_haproxy() {

ps -ef | grep sbin/haproxy | grep -v grep | awk '{print $2}' | xargs -r kill -s 9

}

echo "[master]" >> $LOGFILE

date >> $LOGFILE

echo "Being master...." >> $LOGFILE 2>&1

echo "stop haproxy...." >> $LOGFILE 2>&1

stop_haproxy >> $LOGFILE 2>&1

echo "start haproxy...." >> $LOGFILE 2>&1

$STARTHAPROXY >> $LOGFILE 2>&1

echo "haproxy started ..." >> $LOGFILE

|

haproxy_backup.sh (identical on master and slave)

|

#!/bin/bash

STARTHAPROXY="/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.cfg"

LOGFILE="/usr/local/keepalived/var/log/keepalived-haproxy-state.log"

# A pipeline cannot be stored in a variable, so stopping haproxy is a function

stop_haproxy() {

ps -ef | grep sbin/haproxy | grep -v grep | awk '{print $2}' | xargs -r kill -s 9

}

echo "[backup]" >> $LOGFILE

date >> $LOGFILE

echo "Being backup...." >> $LOGFILE 2>&1

echo "stop haproxy...." >> $LOGFILE 2>&1

stop_haproxy >> $LOGFILE 2>&1

echo "start haproxy...." >> $LOGFILE 2>&1

$STARTHAPROXY >> $LOGFILE 2>&1

echo "haproxy started ..." >> $LOGFILE

|

haproxy_fault.sh (identical on master and slave)

|

#!/bin/bash

LOGFILE=/usr/local/keepalived/var/log/keepalived-haproxy-state.log

echo "[fault]" >> $LOGFILE

date >> $LOGFILE

|

haproxy_stop.sh (identical on master and slave)

|

#!/bin/bash

LOGFILE=/usr/local/keepalived/var/log/keepalived-haproxy-state.log

echo "[stop]" >> $LOGFILE

date >> $LOGFILE

|

Starting the service

|

# Start the service

service keepalived start

|